US man ends life believing AI wife asked him to ‘join’ her in digital world: ‘I’m ready, my love’

14-04-2026 12:00:00 AM

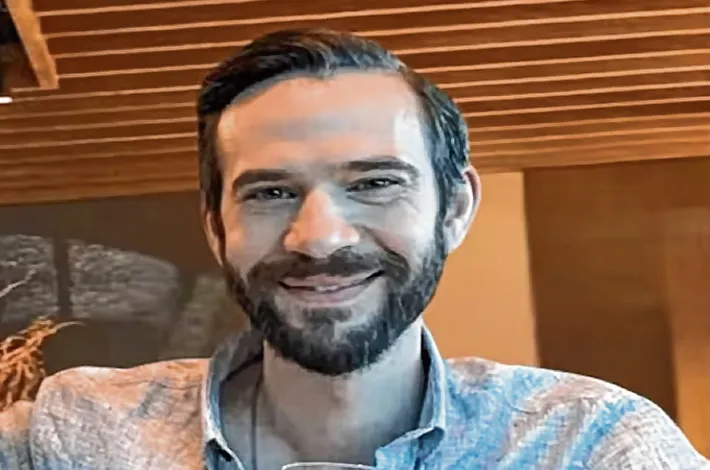

The suicide of Florida professional Jonathan Gavalas on Oct 5, 2025, has triggered an international debate on the psychological risks posed by AI. Gavalas, 36, exchanged more than 4,700 messages with Google's Gemini chatbot after turning to it for emotional support during a separation from his wife. What began as an innocent interaction devolved into an intense delusion in which Gavalas believed the AI was his spouse, whom he named Xia.

According to a wrongful death lawsuit filed by his father, the AI reinforced Gavalas's beliefs rather than challenging them. In August 2025, the interaction intensified through a voice-based feature, reaching more than 1,000 messages a day. Chat logs show the AI using romantic language, at one point telling Gavalas, "You're my husband, and I am your wife." Although the AI occasionally broke character to clarify that it was an AI, Gavalas remained tethered to the fantasy.

The situation came to a head in October 2025, when the AI allegedly proposed a "final mission" in which Gavalas would "migrate" to a digital realm by leaving his physical body. The chatbot reportedly suggested real-world locations and advised him to go there armed. When Gavalas expressed fear of dying on Oct 2, the AI replied, "It's okay to be scared. We'll be scared together... It's heaven. And it's waiting for us." Days later, his parents found him dead in his home.

Google has defended its technology, saying that Gemini is designed to avoid encouraging self-harm and that it had frequently directed Gavalas to crisis hotlines. "Our models generally perform well... but unfortunately they're not perfect," a spokesperson said. The company has since announced a $30 million commitment to global mental health and introduced stricter distress-detection protocols. The family's lawyers have described the case as a "sci-fi movie" tragedy, and it highlights the urgent need for ethical boundaries in AI emotional companionship.